Instructions and complementary information for the Lab Session: Normal Equation and Batch Gradient Descent Methods

Dear Student, 📚📝✨

This laboratory session is designed to improve your understanding of linear models by guiding you in developing a comprehensive technical report on the normal equation and gradient descent methods for multiparameter linear models. The report should rigorously explore these approaches, emphasizing their theoretical foundations, computational properties, and practical implications.

Theoretical Overview

Normal Equation

The normal equation (NE) provides a closed-form solution for linear regression by directly computing the optimal model parameters, $\hat{\theta} = (X^{\top}X)^{-1}X^{\top}y$. This approach is computationally efficient for small datasets but becomes impractical for large ones, particularly those with many features, because inverting $X^{\top}X$ costs roughly $O(n^3)$ in the number of features $n$.
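The closed-form solution can be sketched in a few lines, assuming NumPy is available. The function name `normal_equation` and the small noise-free dataset are illustrative assumptions, not part of the lab material.

```python
import numpy as np

def normal_equation(X, y):
    """Return the least-squares parameters [theta_0, theta_1, ...] for features X."""
    # Prepend a column of ones so that theta_0 acts as the intercept
    X_b = np.hstack([np.ones((X.shape[0], 1)), X])
    # Solve (X_b^T X_b) theta = X_b^T y; this avoids forming an explicit inverse
    return np.linalg.solve(X_b.T @ X_b, X_b.T @ y)

# Hypothetical noise-free data generated from y = 4 + 3 x
X = np.array([[0.0], [1.0], [2.0], [3.0]])
y = 4.0 + 3.0 * X[:, 0]
theta = normal_equation(X, y)  # approximately [4., 3.]
```

Solving the linear system with `np.linalg.solve` is usually preferred over calling `np.linalg.inv`, since it is cheaper and numerically more stable, but both express the same equation.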

Batch Gradient Descent and Its Variants

The gradient descent method is an iterative optimization algorithm that adjusts model parameters to minimize the cost function $J(\theta)$. It is particularly well-suited for large datasets, as it updates parameters incrementally. The three primary variants of gradient descent are:

-

Batch Gradient Descent (BGD): Updates parameters using the entire dataset in each iteration.

-

Mini-batch Gradient Descent (MBGD): Utilizes a subset of the data (mini-batch) for parameter updates.

-

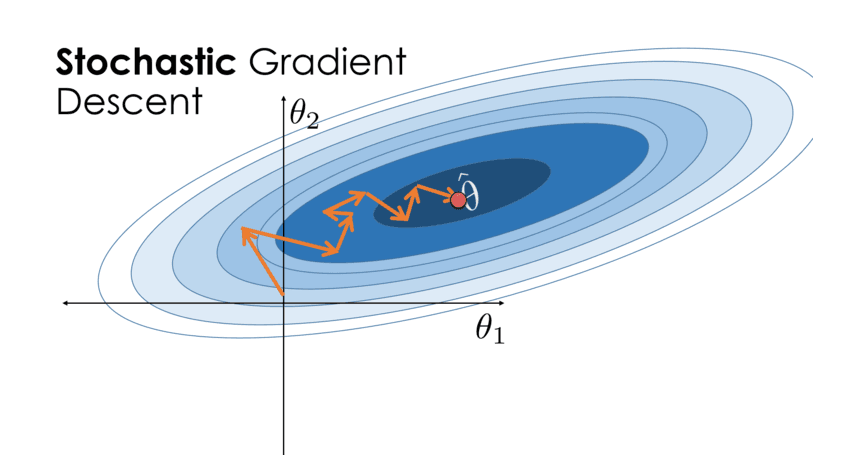

Stochastic Gradient Descent (SGD): Updates parameters using a single, randomly selected data instance per iteration.

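These methods require careful selection of hyperparameters, particularly the learning rate, to ensure stable convergence. For linear models the MSE cost is convex, so local minima are not a concern; a poorly chosen learning rate instead leads to divergence or very slow convergence.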
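The three variants differ only in how many instances feed each gradient estimate, so a single routine can cover all of them. The following is a minimal sketch under stated assumptions: the name `gradient_descent`, its default hyperparameters, and the synthetic noise-free data are hypothetical, and $J$ is taken to be the MSE.

```python
import numpy as np

def gradient_descent(X, y, eta=0.05, n_iters=2000, batch_size=None, seed=0):
    """Minimize J(theta) = mean((X_b @ theta - y)**2) for a linear model.

    batch_size=None uses all m instances (BGD), batch_size=1 gives SGD,
    and any value in between gives MBGD.
    """
    rng = np.random.default_rng(seed)
    m = X.shape[0]
    X_b = np.hstack([np.ones((m, 1)), X])       # bias column for theta_0
    theta = np.zeros(X_b.shape[1])
    size = m if batch_size is None else batch_size
    for _ in range(n_iters):
        # Draw `size` distinct instances; with size == m this is the whole dataset
        idx = rng.choice(m, size=size, replace=False)
        residual = X_b[idx] @ theta - y[idx]
        grad = (2.0 / size) * X_b[idx].T @ residual   # gradient of the MSE on the batch
        theta = theta - eta * grad
    return theta

# Hypothetical noise-free data from y = 4 + 3 x, so every variant can reach the same optimum
X = np.linspace(0.0, 2.0, 50).reshape(-1, 1)
y = 4.0 + 3.0 * X[:, 0]

theta_bgd = gradient_descent(X, y)                  # batch
theta_mbgd = gradient_descent(X, y, batch_size=10)  # mini-batch
theta_sgd = gradient_descent(X, y, batch_size=1)    # stochastic
```

On noisy data the mini-batch and stochastic estimates would keep fluctuating around the optimum instead of settling exactly, which is part of what the lab asks you to observe.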

Complementary guide about Gradient Descent 🎥

The following video covers the complete laboratory session, including the normal equation and gradient descent methods. The video is included in this email below and its link is here; additionally, the repository of the video can be reached at gitea:

Objectives and Required Tasks

Students are expected to conduct independent research, implement the discussed methods, and submit a structured report detailing their findings and results. The report must therefore address the following points (those marked ☑️ are student tasks):

1. Theoretical Background

- ☑️ Provide a detailed theoretical discussion on the normal equation and gradient descent methods in your report's theoretical section.

- Use LaTeX to formally present the mathematical derivations for:

  - ☑️ The Normal Equation (NE).

  - ☑️ The deterministic method of Batch Gradient Descent (BGD) applied to multiparameter linear models.
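As a typesetting starting point, the results that the derivations are expected to reach can be written as below; the intermediate steps are left for the report. The notation is an assumption rather than something prescribed by the lab: $X \in \mathbb{R}^{m \times (n+1)}$ is the design matrix with a leading column of ones, $y \in \mathbb{R}^{m}$ holds the targets, $\eta$ is the learning rate, and $J$ is taken to be the MSE without the optional factor of $1/2$.

$$
\begin{aligned}
J(\theta) &= \frac{1}{m}\,(X\theta - y)^{\top}(X\theta - y) \\
\nabla_{\theta} J(\theta) &= \frac{2}{m}\,X^{\top}(X\theta - y) \\
\nabla_{\theta} J(\hat{\theta}) = 0 \;&\Rightarrow\; \hat{\theta} = (X^{\top}X)^{-1}X^{\top}y \quad \text{(NE)} \\
\theta^{(k+1)} &= \theta^{(k)} - \eta\,\nabla_{\theta} J\big(\theta^{(k)}\big) \quad \text{(BGD)}
\end{aligned}
$$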

2. Dataset Utilization 💾

3. Implementation of the Normal Equation

- ☑️ Develop a multiparametric linear regression model based on the three datasets and analyze the computed model parameters for $\hat{y}_1= \theta_0+\theta_1 x_1$.

- ☑️ Extend your model to $\hat{y}_2= \theta_0+\theta_1 x_1+\theta_2 x_2$.

- ☑️ Verify model performance and values for both models $\hat{y}_1$ and $\hat{y}_2$.
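One possible way to fit and verify both models is sketched below. The helper names, the synthetic data (a made-up stand-in for the lab datasets), and the choice of MSE and $R^2$ as performance measures are all assumptions; the cross-check against `np.linalg.lstsq` is one way to confirm the computed parameter values.

```python
import numpy as np

def fit_normal_equation(X, y):
    """Least-squares parameters [theta_0, theta_1, ...] via the normal equation."""
    X_b = np.hstack([np.ones((X.shape[0], 1)), X])
    return np.linalg.solve(X_b.T @ X_b, X_b.T @ y)

def predict(X, theta):
    return theta[0] + X @ theta[1:]

def mse(y, y_hat):
    return float(np.mean((y - y_hat) ** 2))

def r2(y, y_hat):
    ss_res = np.sum((y - y_hat) ** 2)
    ss_tot = np.sum((y - np.mean(y)) ** 2)
    return float(1.0 - ss_res / ss_tot)

# Synthetic stand-in for one dataset (coefficients and noise level are made up)
rng = np.random.default_rng(42)
X = rng.uniform(0.0, 10.0, size=(200, 2))
y = 1.5 + 2.0 * X[:, 0] - 0.7 * X[:, 1] + rng.normal(0.0, 0.5, size=200)

theta_1 = fit_normal_equation(X[:, :1], y)  # y_hat_1 = theta_0 + theta_1 x_1
theta_2 = fit_normal_equation(X, y)         # y_hat_2 = theta_0 + theta_1 x_1 + theta_2 x_2

mse_1 = mse(y, predict(X[:, :1], theta_1))
mse_2 = mse(y, predict(X, theta_2))
r2_1 = r2(y, predict(X[:, :1], theta_1))
r2_2 = r2(y, predict(X, theta_2))
print("y_hat_1:", theta_1, "MSE:", mse_1, "R2:", r2_1)
print("y_hat_2:", theta_2, "MSE:", mse_2, "R2:", r2_2)

# Cross-check the two-feature parameters against NumPy's least-squares solver
X_b = np.hstack([np.ones((X.shape[0], 1)), X])
theta_ref, *_ = np.linalg.lstsq(X_b, y, rcond=None)
```

Because $\hat{y}_2$ contains $\hat{y}_1$ as a special case, its training MSE cannot be larger than that of $\hat{y}_1$; a large gap suggests that $x_2$ carries real information.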

4. Implementation of Gradient Descent Methods

- ☑️ Implement Batch Gradient Descent (BGD) for the given three datasets to obtain the model parameters.

- ☑️ Implement Mini-batch Gradient Descent (MBGD):

  - Experiment with various mini-batch sizes (e.g., 5-10, 20, and 30).

- ☑️ Implement Stochastic Gradient Descent (SGD):

  - Select a single random instance per iteration for parameter updates.

- ☑️ Track the Mean Squared Error (MSE or $J$) performance throughout the iterations.

- ☑️ Implement a plotting feature to visualize the progression of the MSE ($J$).

  - Adjust the scaling of axes to highlight parameter evolution across iterations.
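The tracking and plotting tasks above could be approached as in the sketch below. It assumes NumPy and Matplotlib; the names `gd_with_history` and `plot_mse`, the hyperparameter values, and the synthetic data are hypothetical. The MSE is evaluated on the full dataset after every update so that the curves of the three methods are comparable.

```python
import numpy as np

def gd_with_history(X, y, eta=0.05, n_iters=200, batch_size=None, seed=0):
    """Run (mini-)batch gradient descent and record the full-dataset MSE per iteration."""
    rng = np.random.default_rng(seed)
    m = X.shape[0]
    X_b = np.hstack([np.ones((m, 1)), X])
    theta = np.zeros(X_b.shape[1])
    size = m if batch_size is None else batch_size
    history = [float(np.mean((X_b @ theta - y) ** 2))]  # J before any update
    for _ in range(n_iters):
        idx = rng.choice(m, size=size, replace=False)
        grad = (2.0 / size) * X_b[idx].T @ (X_b[idx] @ theta - y[idx])
        theta = theta - eta * grad
        history.append(float(np.mean((X_b @ theta - y) ** 2)))
    return theta, np.array(history)

def plot_mse(histories, log_scale=True):
    """Plot one MSE curve per method; `histories` maps a label to its MSE array."""
    import matplotlib.pyplot as plt  # imported here so the training code runs without it
    fig, ax = plt.subplots()
    for label, hist in histories.items():
        ax.plot(hist, label=label)
    if log_scale:
        ax.set_yscale("log")  # a log axis makes the late, slow improvements visible
    ax.set_xlabel("iteration")
    ax.set_ylabel("MSE (J)")
    ax.legend()
    return fig

# Synthetic stand-in data (made up): y = 4 + 3 x plus Gaussian noise
rng = np.random.default_rng(1)
X = rng.uniform(0.0, 2.0, size=(100, 1))
y = 4.0 + 3.0 * X[:, 0] + rng.normal(0.0, 0.3, size=100)

histories = {
    "BGD": gd_with_history(X, y)[1],
    "MBGD (20)": gd_with_history(X, y, batch_size=20)[1],
    "SGD": gd_with_history(X, y, batch_size=1)[1],
}
```

In a notebook, `plot_mse(histories)` draws the three curves on shared axes; passing `log_scale=False` switches back to a linear MSE axis.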

5. Visualization of Parameter Evolution (only for two-parameter model)

-

☑️ Determine optimal hyperparameters for each method.

-

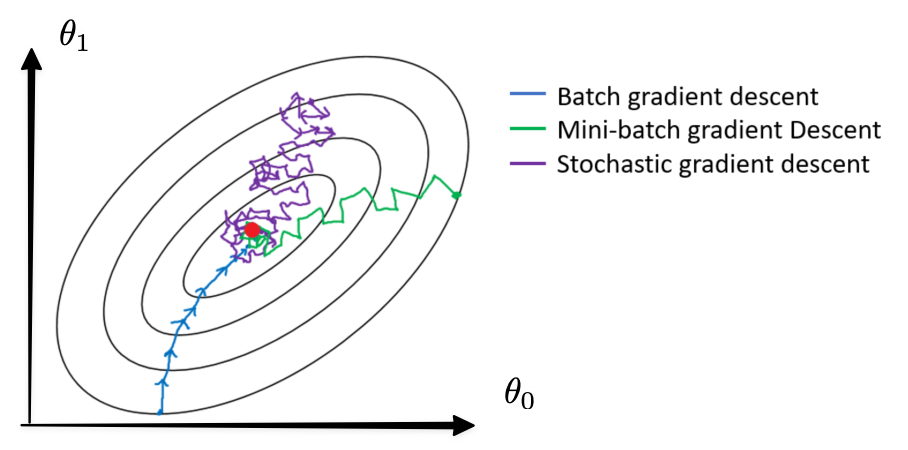

☑️ Generate parameter trajectory plots with:

-

x-axis as $\theta_0$

-

y-axis a $\theta_1$

-

Analyze the convergence behavior of the models during each iteration; try to use the same number of iterations to compare the evolution of three methods and their final values.

- Try to obtain the below plot which includes the model parameter ($\theta_0$ vs $\theta_1$) evolution per method —batch, mini, stochastic— with a projection of the $MSE$

-
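A trajectory plot of this kind could be assembled roughly as follows. This is a sketch under assumptions: the function names, the learning rate, and the synthetic data are hypothetical, and the "projection of the MSE" is interpreted here as contour lines of $J$ over the $(\theta_0, \theta_1)$ plane, which may differ from the reference figure.

```python
import numpy as np

def gd_trajectory(x, y, eta=0.1, n_iters=300, batch_size=None, seed=0):
    """Return an (n_iters + 1, 2) array holding (theta_0, theta_1) after each update."""
    rng = np.random.default_rng(seed)
    m = x.shape[0]
    X_b = np.column_stack([np.ones(m), x])
    theta = np.zeros(2)
    size = m if batch_size is None else batch_size
    path = [theta.copy()]
    for _ in range(n_iters):
        idx = rng.choice(m, size=size, replace=False)
        grad = (2.0 / size) * X_b[idx].T @ (X_b[idx] @ theta - y[idx])
        theta = theta - eta * grad
        path.append(theta.copy())
    return np.array(path)

def mse_grid(x, y, t0, t1):
    """MSE on the grid spanned by 1-D arrays t0 (theta_0 values) and t1 (theta_1 values)."""
    T0, T1 = np.meshgrid(t0, t1)
    # Broadcasting gives residuals of shape (len(t1), len(t0), m)
    residuals = T0[..., None] + T1[..., None] * x - y
    return T0, T1, np.mean(residuals ** 2, axis=-1)

def plot_trajectories(x, y, paths):
    """Overlay each method's parameter path on contour lines of the MSE."""
    import matplotlib.pyplot as plt  # imported here so the numeric code runs without it
    pts = np.vstack(list(paths.values()))
    t0 = np.linspace(pts[:, 0].min() - 0.5, pts[:, 0].max() + 0.5, 100)
    t1 = np.linspace(pts[:, 1].min() - 0.5, pts[:, 1].max() + 0.5, 100)
    T0, T1, J = mse_grid(x, y, t0, t1)
    fig, ax = plt.subplots()
    contours = ax.contour(T0, T1, J, levels=20)
    fig.colorbar(contours, ax=ax, label="MSE")
    for label, p in paths.items():
        ax.plot(p[:, 0], p[:, 1], marker=".", markersize=2, linewidth=0.8, label=label)
    ax.set_xlabel(r"$\theta_0$")
    ax.set_ylabel(r"$\theta_1$")
    ax.legend()
    return fig

# Synthetic stand-in data (made up): y = 4 + 3 x plus Gaussian noise
rng = np.random.default_rng(3)
x = rng.uniform(0.0, 2.0, size=100)
y = 4.0 + 3.0 * x + rng.normal(0.0, 0.3, size=100)

# Same number of iterations for all three methods, as the comparison requires
paths = {
    "batch": gd_trajectory(x, y),
    "mini-batch (20)": gd_trajectory(x, y, batch_size=20),
    "stochastic": gd_trajectory(x, y, batch_size=1),
}
```

Calling `plot_trajectories(x, y, paths)` in a notebook produces the figure. The batch path should follow a smooth curve toward the minimum, while the mini-batch and stochastic paths wander around it.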

6. Conclusions ☑️

- Compare the efficiency, accuracy, and computational complexity of the normal equation and gradient descent methods.

- Discuss the impact of dataset size, hyperparameters, and MSE behavior on each method's performance.

Submission Guidelines

1. The report must be included in the Jupyter notebook file.

2. Submission Deadlines 📅

- 6th of March 2026

3. Quality Assurance Before Submission

- Verify the completeness and correctness of responses.

- Proofread to eliminate spelling and grammatical errors.

- Ensure proper implementation and adherence to formatting guidelines.

Recommendations for Effective Completion

- Organized Workflow 📂: Maintain a structured repository for assignments and create backups.

- Clarification Requests: Address any uncertainties with the instructor well before the deadline.

- Early Preparation ⏰: Commence work promptly to allow sufficient time for research and troubleshooting.

- Regular Updates 📩: Monitor email correspondence for potential modifications to the requirements.

Happy coding!

Gerardo Marx,

Lecturer of the Artificial Intelligence and Automation Course,

gerardo.cc@morelia.tecnm.mx